OServer C++ library

v3.1.7

Table of contents

- Overview

- How to start

- Versions

- Library files

- OServer class description

- JSON configuration string

- Callback mechanism

- Simple example

- Compliance Test Template

- Running ONVIF Conformance Tests

- Test application

- Dependencies

- Build and connect to your project

Disclaimer

ONVIF® is a registered trademark of ONVIF, Inc. The ONVIF name is used here only in a descriptive manner to indicate that this library is intended to implement aspects of the ONVIF® specifications for interoperability. This project is not affiliated with, endorsed by, or sponsored by ONVIF, Inc. or its members. No ONVIF® conformance or certification is claimed. Any conformance claim can only be made by a finished product that has successfully completed ONVIF’s official conformance process and received a valid Declaration of Conformance (DoC) from ONVIF. No claim is made to the exclusive right to use “ONVIF” apart from the technical reference shown in this library’s documentation and code.

Overview

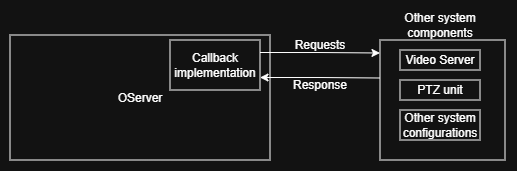

OServer is a C++ library that provides a server implementation based on the ONVIF® specifications. The library supports Linux only and requires the C++17 standard. It is designed for applications that require server functionality implementing the ONVIF® specifications, such as video surveillance systems, IP cameras, and other devices.

The OServer library has the following dependencies: the nlohmann JSON library (source code included), zlib (linked, ZLIB license), and OpenSSL (linked, Apache 2.0 license).

The repository also includes a Compliance Test Template intended to help implement Profile S and Profile T features for ONVIF conformance testing. The Compliance Test Template requires additional dependencies: VStreamerMediaMtx (provides an RTSP video server, source code included), CppHttpLib (provides an HTTP server required for ONVIF® Profile S, v3.14.0 source code included, MIT license), and VCodecGst (provides GStreamer-based video codec support, source code included). These dependencies provide video management and streaming capabilities used by the Profile S template.

The library manages ONVIF® requests and responses using a callback mechanism: the user defines a global callback, and the library invokes this callback with request/response type parameters. OServer is configured via a JSON string.

Note: Claiming ONVIF® conformance for a product requires completion of ONVIF’s official conformance process and a valid DoC.

How to start

To start the ONVIF® server using the OServer library, you need to create an application that initializes and starts the server. The application should include the necessary headers, define a callback function to handle ONVIF® requests, and use the OServer class to manage the server lifecycle. Note that the OServer library does not provide real video streaming or PTZ control functionality: you need to implement these features in your application and handle the related ONVIF® requests in the callback function.

As shown in the diagram above, OServer receives ONVIF® requests from clients and forwards them to the callback function. The callback function processes the requests, interacts with your camera hardware or software to apply settings or retrieve information, and returns responses back to the clients through the OServer library.
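
The request flow described above can be modeled in a few lines. The sketch below is not the OServer API: `DemoRequest`, `DemoResult`, `DemoCamera`, `handleRequest`, and `forwardToCallback` are hypothetical stand-ins chosen only for illustration. The real callback type is `onvif_handler_t`, declared in OServer.h, and its request and result types differ from the ones used here.

```cpp
// Hypothetical stand-ins; the real request and result types come from OServer.h.
enum class DemoRequest { GetBrightness, SetBrightness };
enum class DemoResult { Ok, Failed };

// Stand-in for camera state owned by the application.
struct DemoCamera {
    float brightness = 50.0f;
};

// Application-side handler type: receives the request kind and a value slot.
using DemoHandler = DemoResult (*)(DemoCamera&, DemoRequest, float&);

// The application callback: applies a setting or reads it back.
DemoResult handleRequest(DemoCamera& camera, DemoRequest request, float& value)
{
    switch (request) {
    case DemoRequest::GetBrightness:
        value = camera.brightness;      // Retrieve information for the response.
        return DemoResult::Ok;
    case DemoRequest::SetBrightness:
        if (value < 0.0f || value > 100.0f)
            return DemoResult::Failed;  // Reject out-of-range settings.
        camera.brightness = value;      // Apply the setting to the "hardware".
        return DemoResult::Ok;
    }
    return DemoResult::Failed;
}

// Stand-in for the library side: forwards a client request to the callback.
DemoResult forwardToCallback(DemoHandler handler, DemoCamera& camera,
                             DemoRequest request, float& value)
{
    return handler ? handler(camera, request, value) : DemoResult::Failed;
}
```

The point of the split is that the forwarding side knows nothing about the camera: all device-specific behavior lives in the callback, which is the part the application has to supply.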

Versions

Table 1 - Library versions.

| Version | Release date | What’s new |
|---|---|---|
| 1.0.0 | 15.12.2024 | - First version. |
| 1.1.0 | 23.07.2025 | - Profile S support added. |
| 2.0.0 | 23.07.2025 | - Interface updated. <br> - Profile S template added. |
| 2.0.1 | 27.07.2025 | - VStreamerMediaMtx submodule update in example. |
| 2.0.2 | 10.08.2025 | - VStreamerMediaMtx submodule update in example. |
| 2.0.3 | 06.10.2025 | - VStreamerMediaMtx submodule update in example. |
| 3.0.0 | 10.10.2025 | - Class name changed to OServer. |
| 3.0.1 | 15.11.2025 | - Updated submodules for test application. |
| 3.0.2 | 21.11.2025 | - Update Profile S template to use latest VStreamerMediaMtx. |
| 3.0.3 | 28.11.2025 | - VStreamerMediaMtx submodule updated for template application. |
| 3.1.0 | 20.02.2026 | - Profile T support added to Compliance Test Template. <br> - VStreamerMediaMtx submodule updated for template application; the mediamtx executable is now embedded. |
| 3.1.1 | 01.03.2026 | - VStreamerMediaMtx submodule updated for template application. |
| 3.1.2 | 22.03.2026 | - VStreamerMediaMtx submodule updated for template application. |
| 3.1.3 | 05.04.2026 | - VStreamerMediaMtx submodule updated for template application. |
| 3.1.4 | 14.04.2026 | - VStreamerMediaMtx submodule updated for template application. |
| 3.1.5 | 06.05.2026 | - VStreamerMediaMtx submodule updated for template application. |
| 3.1.6 | 07.05.2026 | - A set of fixes for Profile T conformance support. |
| 3.1.7 | 13.05.2026 | - VStreamerMediaMtx and VCodecGst submodules updated for test application. |

Library files

The library is supplied as source code only. The user is provided with a set of files in the form of a CMake project (repository). The repository structure is shown below:

    CMakeLists.txt --------------- Main CMake file of the library.
    src -------------------------- Folder with library source code.
        CMakeLists.txt ----------- CMake file of the library.
        OServer.cpp -------------- C++ implementation file.
        OServer.h ---------------- Main library header file.
        OServerVersion.h --------- Header file with library version.
        OServerVersion.h.in ------ CMake service file to generate version file.
        nlohmann_json.hpp -------- Header file with nlohmann JSON library.
        ... ---------------------- Other backend files.
    example ---------------------- Folder for example application files.
        CMakeLists.txt ----------- CMake file of example application.
        main.cpp ----------------- Source C++ file of example application.
        DefaultConfigContent.h --- Header file with default configuration content.
    complianceTestTemplate ------- Folder for ONVIF Compliance Test Template files (Profile S & T).
        3rdparty ----------------- Folder with third-party libraries.
            CMakeLists.txt ------- CMake file to include third-party libraries.
            VStreamerMediaMtx ---- Folder with VStreamerMediaMtx source code.
            CppHttpLib ----------- Folder with CppHttpLib source code.
            VCodecGst ------------ Folder with VCodecGst source code.
        images ------------------- Folder with JPEG images for snapshots (camera_1.jpg, camera_2.jpg).
        CMakeLists.txt ----------- CMake file of Compliance Test Template.
        Config.h ----------------- Header file with global configuration constants and variables.
        DefaultConfigContent.h --- Header file with embedded default JSON configuration.
        haproxyConfigContent.h --- Header file with embedded HAProxy configuration template.
        main.cpp ----------------- Source C++ file of Compliance Test Template application.
        Ptz.cpp ------------------ Source C++ file with PTZ emulator implementation.
        Ptz.h -------------------- Header file with PTZ emulator class declaration.
        ServerCallback.cpp ------- Source C++ file with ONVIF® server callback example.
        ServerProfileS.json ------ Example JSON configuration file for Profile S/T testing.
        Utils.cpp ---------------- Source C++ file with utility functions.
        Utils.h ------------------ Header file with utility function declarations.

OServer class description

OServer class declaration

The OServer class is declared in the OServer.h file. Class declaration:

    /// ONVIF® server class.
    class OServer
    {
    public:

        /// Type definition for the ONVIF® message handler callback function.
        using onvif_handler_t = ONVIF_RET (*)(ONVIF_DEVICE *, ONVIF_MSG_TYPE, ...);

        /// Get string of current library version.
        static std::string getVersion();

        /// Initialize the ONVIF® server with the JSON configuration string
        /// and a global handler callback.
        bool init(const std::string& jsonConfig, onvif_handler_t callback);

        /// Initialize the ONVIF® server with the function pointers to initialize
        /// the supported analytics rules and modules. This method is OPTIONAL.
        void initAnalytics(void (*rulesInit)(onvif_SupportedRules *),
                           void (*modulesInit)(onvif_SupportedAnalyticsModules *));

        /// Start the ONVIF® server.
        bool start();

        /// Stop the ONVIF® server.
        bool stop();
    };

getVersion method

The getVersion() method returns the current OServer library version as a string. Method declaration:

static std::string getVersion();

The method can be used without an OServer class instance:

cout << "OServer version: " << OServer::getVersion();

Console output:

OServer version: 3.1.7
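
Because the version is returned as a plain string, an application that wants to check for a minimum library version has to parse it first. The sketch below shows one way to do that. `parseVersion` and `isAtLeast` are hypothetical helpers, not part of OServer, and the code assumes the version string keeps the `major.minor.patch` form shown above.

```cpp
#include <array>
#include <cstddef>
#include <sstream>
#include <stdexcept>
#include <string>

// Hypothetical helper (not part of OServer): splits "major.minor.patch"
// into three integers. Missing or non-numeric fields are left as 0.
std::array<int, 3> parseVersion(const std::string& version)
{
    std::array<int, 3> parts{0, 0, 0};
    std::istringstream stream(version);
    std::string field;
    for (std::size_t i = 0; i < parts.size() && std::getline(stream, field, '.'); ++i) {
        try {
            parts[i] = std::stoi(field);
        } catch (const std::exception&) {
            parts[i] = 0; // Keep 0 when the field is not a number.
        }
    }
    return parts;
}

// Returns true when 'version' is equal to or newer than 'minimum'.
// std::array compares element by element, so 3.10.0 ranks above 3.9.0.
bool isAtLeast(const std::string& version, const std::string& minimum)
{
    return parseVersion(version) >= parseVersion(minimum);
}
```

With the real library linked, a check such as `isAtLeast(OServer::getVersion(), "3.0.0")` could guard code that depends on the class name introduced in version 3.0.0.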

init method

The init(…) method initializes the ONVIF® server. Method declaration:

bool init(const std::string& jsonConfig, onvif_handler_t callback);

| Parameter | Value |
|---|---|
| jsonConfig | A JSON string with the configuration for the ONVIF® server. |
| callback | The callback function to handle ONVIF® requests. See Callback mechanism for more details. |

Returns: TRUE if the initialization was successful or FALSE if not.
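
Since init(…) takes the configuration as a string rather than a file path, an application that keeps its configuration on disk has to read the file first. A minimal sketch of that step follows. `readTextFile` is a hypothetical helper, not part of OServer, and the file name in the usage note is only an example.

```cpp
#include <fstream>
#include <optional>
#include <sstream>
#include <string>

// Hypothetical helper (not part of OServer): reads a whole text file
// into a string. Returns std::nullopt if the file cannot be opened.
std::optional<std::string> readTextFile(const std::string& path)
{
    std::ifstream file(path, std::ios::in | std::ios::binary);
    if (!file.is_open())
        return std::nullopt;

    // Copy the entire stream buffer into memory in one step.
    std::ostringstream buffer;
    buffer << file.rdbuf();
    return buffer.str();
}
```

The returned string could then be passed on, for example `if (auto config = readTextFile("ServerProfileS.json")) server.init(*config, callback);`, where `server` is an OServer instance and `callback` is the application's handler. Returning `std::optional` keeps a missing file distinct from an empty configuration.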

initAnalytics method

The initAnalytics(…) method initializes ONVIF® analytics. Analytics functionality is optional: it is not required by Profile S and is intended for Profile M. Method declaration:

    void initAnalytics(void (*rulesInit)(onvif_SupportedRules *),
                       void (*modulesInit)(onvif_SupportedAnalyticsModules *));

| Parameter | Value |
|---|---|
| rulesInit | The function to initialize ONVIF® supported rules. |
| modulesInit | The function to initialize ONVIF® supported analytics modules. |

start method

The start() method starts the internal HTTP and WebSocket servers and runs the message parsers and related processes. Method declaration:

bool start();

Returns: TRUE if the server was successfully started or FALSE if not.

stop method

The stop() method stops the internal HTTP and WebSocket servers, the message parsers, and related processes, and frees the resources they used. Method declaration:

bool stop();

Returns: TRUE if the server was successfully stopped or FALSE if not.
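
Because start() and stop() form a pair, one common C++ pattern is to tie them to a scope so that stop() is not forgotten on early returns. The sketch below is one possible wrapper, not something the library provides. `ScopedServer` and `FakeServer` are hypothetical names, and the code assumes that calling stop() from a destructor is acceptable for the wrapped object.

```cpp
// Generic scope guard: starts a server-like object on construction and
// stops it on scope exit. 'Server' is any type with bool start() and
// bool stop(), which matches the OServer interface described above.
template <typename Server>
class ScopedServer
{
public:
    explicit ScopedServer(Server& server)
        : m_server(server), m_running(server.start()) {}

    // Stop only if start() reported success.
    ~ScopedServer() { if (m_running) m_server.stop(); }

    // Non-copyable, so stop() runs exactly once per guard.
    ScopedServer(const ScopedServer&) = delete;
    ScopedServer& operator=(const ScopedServer&) = delete;

    bool running() const { return m_running; }

private:
    Server& m_server;
    bool m_running;
};

// Stand-in used only for demonstration; it counts calls instead of serving.
struct FakeServer {
    int starts = 0;
    int stops = 0;
    bool start() { ++starts; return true; }
    bool stop() { ++stops; return true; }
};
```

With the real class, the guard would be created after a successful init(…), for example `ScopedServer<OServer> guard(server);`, and `guard.running()` reports whether start() succeeded.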

JSON configuration string

The OServer library uses a JSON configuration string to initialize the server. The JSON string contains the configuration, including device information, supported services, and capabilities. The JSON string is passed to the init(…) method of the OServer class. By using a JSON configuration string, the user can define different configurations, such as the number of cameras, their names, and other parameters. It is difficult to provide a complete template for the JSON configuration string because it depends on the specific use case and the features that are required. However, the complianceTestTemplate/ServerProfileS.json file contains an example JSON configuration that can be used to initialize a server with two cameras and one PTZ controller. This configuration is intended to support Profile S and Profile T features for ONVIF conformance testing and does not, by itself, constitute an ONVIF® conformance claim.

A simple form of the JSON configuration string is shown below:

{

"config": {

"log_enable": 1,

"log_level": 5,

"device": [

{

"dhcp": 0,

"server_ip": "127.0.0.1",

"interface_ip": "172.23.219.129",

"interface_port": 80,

"http_enable": 1,

"http_port": 10005,

"https_enable": 0,

"https_port": 1443,

"cert_file": "ssl.ca",

"key_file": "ssl.key",

"http_max_users": 16,

"need_auth": 1,

"EndpointReference": "testEndpoint",

"information": {

"Manufacturer": "YourCompany",

"Model": "IPCamera",

"FirmwareVersion": "2.4",

"SerialNumber": "123456",

"HardwareId": "1.0"

},

"user": [

{

"username": "admin",

"password": "admin",

"userlevel": "Administrator"

},

{

"username": "user",

"password": "123456",

"userlevel": "User"

}

],

"RemoteUser": [

{

"username": "remote_user",

"password": "remote_password",

"UseDerivedPassword": false

}

],

"SystemDateTime": {

"TimeZone": {

"TZ": "UTC0"

},

"DateTimeType": "Manual",

"DaylightSavings": true

},

"network": {

"NetworkProtocols": [

{

"Name": "HTTP",

"Enabled": true,

"Port": [80, 8080, 10002]

},

{

"Name": "HTTPS",

"Enabled": false,

"Port": [443, 8443]

},

{

"Name": "RTSP",

"Enabled": true,

"Port": [554, 8554]

}

],

"DNSInformation": {

"FromDHCP": true,

"SearchDomain": [

"example.com",

"example.org"

],

"DNSManual": [

{

"Type": "IPv4",

"IPv4Address": "10.10.10.4"

},

{

"Type": "IPv4",

"IPv4Address": "10.10.10.5"

},

{

"Type": "IPv4",

"IPv4Address": "10.10.10.6"

},

{

"Type": "IPv4",

"IPv4Address": "10.10.10.7"

}

]

},

"NTPInformation": {

"FromDHCP": false,

"NTPManual": [

{

"Type": "IPv4",

"IPv4Address": "192.168.1.14"

},

{

"Type": "IPv4",

"IPv4Address": "192.168.1.14"

},

{

"Type": "DNS",

"DNSname": "time.example.com"

},

{

"Type": "DNS",

"DNSname": "time.example.org"

}

]

},

"HostnameInformation": {

"FromDHCP": false,

"RebootNeeded": false

},

"NetworkGateway": {

"IPv4Address": [

"192.168.1.1",

"192.168.1.2"

]

},

"DiscoveryMode": "Discoverable",

"DynamicDNSInformation": {

"Type": "NoUpdate",

"Name": "example.com",

"TTL": 3600

},

"ZeroConfiguration": {

"InterfaceToken": "NetworkInterfaceToken_1",

"Enabled": true

}

},

"HashingAlgorithm": {

"@md5": 1,

"@sha256": 1

},

"VideoSources": [

{

"@token": "Camera1",

"Framerate": 30.0,

"Resolution": {

"Width": 1920,

"Height": 1080

},

"VideoSourceModes": {

"@token": "Camera1_VSMode1",

"@Enabled": true,

"MaxFramerate": 30.0,

"MaxResolution": {

"Width": 1920,

"Height": 1080

},

"Encodings": "H264",

"Reboot": false,

"Description": "Default Mode"

},

"ImagingSettings": {

"Brightness": 50.0,

"ColorSaturation": 50.0,

"Contrast": 50.0,

"Exposure": {

"Mode": "AUTO",

"Priority": "LowNoise",

"Window": {

"@bottom": 0.0,

"@top": 0.0,

"@left": 0.0,

"@right": 0.0

},

"MinExposureTime": 0.1,

"MaxExposureTime": 1.0,

"MinGain": 1.0,

"MaxGain": 6.0,

"MinIris": 1.0,

"MaxIris": 8.0,

"ExposureTime": 0.1,

"Gain": 1.0,

"Iris": 1.0

},

"Focus": {

"AutoFocusMode": "AUTO",

"DefaultSpeed": 0.5,

"NearLimit": 0.0,

"FarLimit": 5.0,

"Smoothing": true

},

"IrCutFilter": "AUTO",

"Sharpness": 50.0,

"WideDynamicRange": {

"Mode": "ON",

"Level": 0.0

},

"WhiteBalance": {

"Mode": "AUTO",

"CrGain": 0.0,

"CbGain": 0.0

}

},

"ImagingOptions": {

"Brightness": {

"Min": 0.0,

"Max": 100.0

},

"ColorSaturation": {

"Min": 0.0,

"Max": 100.0

},

"Contrast": {

"Min": 0.0,

"Max": 100.0

},

"Exposure": {

"Mode": [

"AUTO",

"MANUAL"

],

"Priority": [

"LowNoise",

"FrameRate"

],

"MinExposureTime": {

"Min": 0.1,

"Max": 1.0

},

"MaxExposureTime": {

"Min": 0.1,

"Max": 1.0

},

"MinGain": {

"Min": 1.0,

"Max": 6.0

},

"MaxGain": {

"Min": 1.0,

"Max": 6.0

},

"MinIris": {

"Min": 1.0,

"Max": 8.0

},

"MaxIris": {

"Min": 1.0,

"Max": 8.0

},

"ExposureTime": {

"Min": 0.1,

"Max": 1.0

},

"Gain": {

"Min": 1.0,

"Max": 6.0

},

"Iris": {

"Min": 1.0,

"Max": 8.0

}

},

"Focus": {

"AutoFocusMode": [

"AUTO",

"MANUAL"

],

"DefaultSpeed": {

"Min": 0.0,

"Max": 1.0

},

"NearLimit": {

"Min": 0.0,

"Max": 5.0

},

"FarLimit": {

"Min": 0.0,

"Max": 5.0

}

},

"IrCutFilterModes": [

"AUTO",

"ON",

"OFF"

],

"Sharpness": {

"Min": 0.0,

"Max": 100.0

},

"WideDynamicRange": {

"Mode": [

"OFF",

"ON"

],

"Level": {

"Min": 0.0,

"Max": 100.0

}

},

"WhiteBalance": {

"Mode": [

"AUTO",

"MANUAL"

],

"YrGain": {

"Min": 0.0,

"Max": 100.0

},

"YbGain": {

"Min": 0.0,

"Max": 100.0

}

}

},

"CurrentPresetToken": "imgPreset1",

"ThermalSupport": true,

"ThermalConfiguration": {

"ColorPalette": {

"@token": "palette1",

"@Type": "Rainbow",

"@Name": "RainbowPalette"

},

"Polarity": "WhiteHot",

"NUCTable": {

"@token": "nucTable1",

"@LowTemperture": -30.0,

"@HighTemperture": 50.0,

"@Name": "NUCTable1"

},

"Cooler": {

"Enabled": true,

"RunTime": 24.0

}

},

"RadiometryConfiguration": {

"RadiometryGlobalParameters": {

"ReflectedAmbientTemperature": 20.0,

"Emissivity": 0.95,

"DistanceToObject": 1.0,

"RelativeHumidity": 50.0,

"AtmosphericTemperature": 20.0,

"ExtOpticsTemperature": 20.0,

"ExtOpticsTransmittance": 0.95

}

}

},

{

"@token": "Camera2",

"Framerate": 60.0,

"Resolution": {

"Width": 1920,

"Height": 1080

},

"VideoSourceModes": {

"@token": "Camera2_VSMode1",

"@Enabled": true,

"MaxFramerate": 60.0,

"MaxResolution": {

"Width": 1920,

"Height": 1080

},

"Encodings": "H265",

"Reboot": false,

"Description": "Default Mode"

}

}

],

"VideoSourceConfigurations": [

{

"@token": "Camera1_config",

"Name": "Camera1_config",

"SourceToken": "Camera1",

"Bounds": {

"@width": 1920,

"@height": 1080,

"@x": 0,

"@y": 0

}

},

{

"@token": "Camera2_config",

"Name": "Camera2_config",

"SourceToken": "Camera2",

"Bounds": {

"@width": 1920,

"@height": 1080,

"@x": 0,

"@y": 0

}

}

],

"VideoEncoderConfigurations": [

{

"@token": "Camera1",

"@GovLength": 30,

"@AnchorFrameDistance": 1,

"@Profile": "High",

"@GuaranteedFrameRate": 30,

"Name": "Camera1_encoder",

"Encoding": "H264",

"Resolution": {

"Width": 1920,

"Height": 1080

},

"RateControl": {

"@ConstantBitRate": true,

"FrameRateLimit": 30,

"BitrateLimit": 2048

},

"Multicast": {},

"Quality": 5,

"SessionTimeout": "PT10S",

"EncodingInterval": 1

},

{

"@token": "Camera2",

"@GovLength": 30,

"@AnchorFrameDistance": 1,

"@Profile": "High",

"@GuaranteedFrameRate": 30,

"Name": "Camera2_encoder",

"Encoding": "H264",

"Resolution": {

"Width": 1280,

"Height": 720

},

"RateControl": {

"@ConstantBitRate": true,

"FrameRateLimit": 30,

"BitrateLimit": 2048

},

"Multicast": {},

"Quality": 5,

"SessionTimeout": "PT10S",

"EncodingInterval": 1

}

],

"AudioSources": [

{

"@token": "audio_camera1",

"Channels": 1

},

{

"@token": "audio_camera2",

"Channels": 2

},

{

"@token": "audio_camera3",

"Channels": 1

}

],

"AudioSourceConfigurations": [

{

"@token": "audio_camera1_config",

"Name": "AudioCamera1Config",

"SourceToken": "audio_camera1"

},

{

"@token": "audio_camera2_config",

"Name": "AudioCamera2Config",

"SourceToken": "audio_camera2"

},

{

"@token": "audio_camera3_config",

"Name": "AudioCamera3Config",

"SourceToken": "audio_camera3"

}

],

"AudioEncoderConfigurations": [

{

"@token": "audio_camera1_encoder",

"Name": "AudioCamera1Encoder",

"Encoding": "PCMU",

"Bitrate": 64,

"SampleRate": 8000,

"SessionTimeout": "PT10S",

"Multicast": {

"Address": {

"IPv4Address": "10.10.10.3"

},

"Port": 5000,

"TTL": 1,

"AutoStart": true

}

},

{

"@token": "audio_camera2_encoder",

"Name": "AudioCamera2Encoder",

"Encoding": "MP4A-LATM",

"Bitrate": 128,

"SampleRate": 44100,

"SessionTimeout": "PT10S",

"Multicast": {

"Address": {

"IPv4Address": "10.10.10.4"

},

"Port": 5001,

"TTL": 1,

"AutoStart": true

}

},

{

"@token": "audio_camera3_encoder",

"Name": "AudioCamera3Encoder",

"Encoding": "G711",

"Bitrate": 64,

"SampleRate": 8000,

"SessionTimeout": "PT10S",

"Multicast": {

"Address": {

"IPv4Address": "10.10.10.5"

},

"Port": 5002,

"TTL": 1,

"AutoStart": true

}

}

],

"AudioDecoderConfigurations": [

{

"@token": "audio_decoder",

"Name": "AudioDecoder",

"Options": {

"AACDecOptions": {

"Bitrate": [128, 256],

"SampleRateRange": [44100, 48000]

},

"G711DecOptions": {

"Bitrate": [64, 128],

"SampleRateRange": [8000, 16000]

},

"G726DecOptions": {

"Bitrate": [32, 64],

"SampleRateRange": [8000, 16000]

}

},

"Options2": [

{

"Encoding": "PCMU",

"BitrateList": [ 64, 128 ],

"SampleRateList": [ 8000, 16000 ]

},

{

"Encoding": "MP4A-LATM",

"BitrateList": [ 128, 256 ],

"SampleRateList": [ 44100, 48000 ]

},

{

"Encoding": "G726",

"BitrateList": [ 32, 64 ],

"SampleRateList": [ 8000, 16000 ]

}

]

}

],

"OSDConfigurations" :[

{

"@token": "osd1",

"VideoSourceConfigurationToken": "Camera1_config",

"Type": "Text",

"Position": {

"Type": "UpperRight"

},

"TextString": {

"Type": "Plain",

"FontSize": 20,

"FontColor": {

"@Transparent": 0,

"Color": {

"@X": 255,

"@Y": 255,

"@Z": 255

}

},

"BackgroundColor": {

"@Transparent": 50,

"Color": {

"@X": 255,

"@Y": 0,

"@Z": 0

}

},

"PlainText": "Hello World"

}

},

{

"@token": "osd2",

"VideoSourceConfigurationToken": "Camera2_config",

"Type": "Text",

"Position": {

"Type": "UpperLeft"

},

"TextString": {

"Type": "Plain",

"FontSize": 20,

"FontColor": {

"@Transparent": 0,

"Color": {

"@X": 255,

"@Y": 255,

"@Z": 255

}

},

"BackgroundColor": {

"@Transparent": 50,

"Color": {

"@X": 255,

"@Y": 0,

"@Z": 0

}

},

"PlainText": "Hello World"

}

}

],

"MetadataConfigurations": [

{

"@token": "metadata1",

"Name": "Metadata1",

"@CompressionType": "",

"@GeoLocation": false,

"@ShapePolygon": false,

"PTZStatus": {

"Status": false,

"Position": false

},

"Analytics": false,

"Multicast": {

"Address": {

"IPv4Address": "239.255.255.250"

},

"Port": 3702,

"TTL": 1,

"AutoStart": false

},

"SessionTimeout": "PT10S",

"AnalyticsEngineConfiguration": {

"AnalyticsModule": [

{

"@Name": "ObjectDetection",

"@Type": "ObjectDetection",

"Parameters": {

"SimpleItem" : [

{

"@Name": "Sensitivity",

"@Value": "0.5"

},

{

"@Name": "Threshold",

"@Value": "0.5"

}

],

"ElementItem": [

{

"@Name": "Region",

"CellLayout": {

"@Columns": 22,

"@Rows": 18,

"Transformation": {

"Translate": {

"@x": -1,

"@y": -2

},

"Scale": {

"x": 0.1,

"y": 1.1

}

}

}

}

]

}

}

]

}

}

],

"Masks": [

{

"@token": "mask1",

"ConfigurationToken": "Camera1_config",

"Type": "Color",

"Polygon": {

"Point": [

{

"@x": 0.1,

"@y": 0.2

},

{

"@x": 0.3,

"@y": 0.4

},

{

"@x": 0.5,

"@y": 0.6

}

]

},

"Color": {

"@X": 255,

"@Y": 0,

"@Z": 0

},

"Colorspace": "http://www.onvif.org/ver10/colorspace/RGB",

"Enabled": true

}

],

"VideoAnalyticsConfigurations": [

{

"@token": "analytics1",

"Name": "Analytics1",

"AnalyticsEngineConfiguration": {

"AnalyticsModule": [

{

"@Name": "MyCellMotionEngine",

"@Type": "CellMotionEngine",

"Parameters": {

"ElementItem": {

"@Name": "Layout",

"CellLayout": {

"@Columns": "22",

"@Rows": "18",

"Transformation": {

"Scale": {

"@x": "0.090909",

"@y": "0.111111"

},

"Translate": {

"@x": "-1.000000",

"@y": "-1.000000"

}

}

}

},

"SimpleItem": {

"@Name": "Sensitivity",

"@Value": "6"

}

}

},

{

"@Name": "MRD",

"@Type": "MotionRegionDetector",

"Parameters": {

"SimpleItem": {

"@Name": "Sensitivity",

"@Value": "4"

}

}

}

]

},

"RuleEngineConfiguration": {

"Rule": [

{

"@Name": "MyCellMotionDetector",

"@Type": "tt:CellMotionDetector",

"tt:Parameters": {

"tt:SimpleItem": [

{

"@Name": "MinCount",

"@Value": "5"

},

{

"@Name": "AlarmOnDelay",

"@Value": "1000"

},

{

"@Name": "AlarmOffDelay",

"@Value": "1000"

},

{

"@Name": "ActiveCells",

"@Value": "zwA="

}

]

}

},

{

"@Name": "TamperingDetection",

"@Type": "tt:TamperingDetection",

"tt:Parameters": {

"tt:SimpleItem": [

{

"@Name": "Mode",

"@Value": "SignalLoss"

},

{

"@Name": "Threshold",

"@Value": "50.0"

},

{

"@Name": "Duration",

"@Value": "PT10S"

}

]

}

}

]

}

}

],

"VideoOutputs": [

{

"@token": "video_output1",

"Layout": {

"PanelLayout": [

{

"Pane": "",

"Area": {

"@bottom": 0.12,

"@V": 0.22,

"@right": 0.33,

"@left": 0.44

}

}

]

},

"Resolution": {

"Width": 1920,

"Height": 1080

},

"RefreshRate": 60.0,

"AspectRatio": 3

}

],

"VideoOutputConfigurations": [

{

"@token": "video_output_config1",

"Name": "video_output_config1",

"OutputToken": "video_output1"

}

],

"AudioOutputs": [

{

"@token": "audio_output1"

}

],

"AudioOutputConfigurations": [

{

"@token": "audio_output_config1",

"Name": "audio_output_config1",

"OutputToken": "audio_output1",

"SendPrimacy": "Normal",

"OutputLevel": 50,

"Options": {

"OutputTokensAvailable": [

"audio_output1"

],

"SendPrimacyOptions": [

"Normal",

"High"

],

"OutputLevelRange": {

"Min": 0,

"Max": 100

}

}

}

],

"RelayOutputs": [

{

"@token": "relay_output1",

"Properties": {

"Mode": "Bistable",

"DelayTime": "PT1S",

"IdleState": "closed"

},

"Options": {

"@token": "relay_output1",

"Mode": [

"Bistable"

]

}

}

],

"DigitalInputs": [

{

"@token": "digital_input1",

"@IdleState": "closed",

"Options": {

"IdleState": [

"closed",

"open"

]

}

}

],

"SerialPorts": [

{

"@token": "serial_port1",

"Configuration": {

"@type": "RS232",

"BaudRate": 9600,

"CharacterLength": 8,

"ParityBit": "None",

"StopBits": 1

},

"Options": {

"BaudRateList": {

"Items": [9600, 115200]

},

"CharacterLengthList": {

"Items": [7, 8]

},

"ParityBitList": {

"Items": ["None", "Even", "Odd"]

},

"StopBitList": {

"Items": [1, 2]

}

}

}

],

"profile": [

{

"@token": "Camera1Stream1",

"fixed": true,

"Name": "Camera1Stream1",

"VideoSourceConfiguration": {

"@token": "Camera1_config"

},

"VideoEncoderConfiguration": {

"@token": "Camera1"

},

"AudioSourceConfiguration": {

"@token": "audio_camera1_config"

},

"AudioEncoderConfiguration": {

"@token": "audio_camera1_encoder"

},

"AudioDecoderConfiguration": {

"@token": "audio_decoder"

},

"stream_uri": {

"@append_params": 0,

"#text": ""

},

"VideoAnalyticsConfiguration": {

"@token": "analytics1"

},

"MetadataConfiguration": {

"@token": "metadata1"

},

"AudioOutputConfiguration": {

"@token": "audio_output_config1"

},

"PTZConfiguration": {

"@token": "ptz1"

},

"Presets": [

{

"@token": "preset1",

"Name": "MainPosition",

"PTZPosition": {

"PanTilt": {

"@x": 3,

"@y": 3

},

"Zoom": {

"@x": 1

}

}

},

{

"@token": "preset2",

"Name": "SecondPosition",

"PTZPosition": {

"PanTilt": {

"@x": 5,

"@y": 5

},

"Zoom": {

"@x": 2

}

}

}

],

"PresetTours": [

{

"@token": "tour1",

"Name": "Tour1",

"Status": {

"State": "Idle"

},

"AutoStart": true,

"StartingCondition": {

"@RandomPresetOrder": false,

"RecurringTime": "60",

"RecurringDuration": "PT1M30S",

"Direction": "Forward"

},

"TourSpot": [

{

"PresetDetail": {

"PresetToken": "preset1",

"Home": true,

"PTZPosition": {

"PanTilt": {

"@x": 0,

"@y": 0

},

"Zoom": {

"@x": 0

}

}

},

"Speed": {

"PanTilt": {

"@x": 0.5,

"@y": 0.5

},

"Zoom": {

"@x": 1

}

},

"StayTime": "PT2S"

}

]

}

]

},

{

"@token": "Camera2Stream1",

"fixed": false,

"Name": "Camera2Stream1",

"VideoSourceConfiguration": {

"@token": "Camera2_config"

},

"VideoEncoderConfiguration": {

"@token": "Camera2"

},

"AudioSourceConfiguration": {

"@token": "audio_camera1_config"

},

"AudioEncoderConfiguration": {

"@token": "audio_camera1_encoder"

},

"AudioDecoderConfiguration": {

"@token": "audio_decoder"

},

"stream_uri": {

"@append_params": 0,

"#text": ""

},

"PTZConfiguration": {

"@token": "ptz1"

}

}

],

"PTZNodes": [

{

"@token": "ptzNode1",

"@FixedHomePosition": true,

"@GeoMove": true,

"Name": "PTZNode1",

"SupportedPTZSpaces": {

"AbsolutePanTiltPositionSpace": {

"XRange": {

"Min": 0.0,

"Max": 360.0

},

"YRange": {

"Min": -90.0,

"Max": 90.0

}

},

"AbsoluteZoomPositionSpace": {

"XRange": {

"Min": 0.0,

"Max": 10.0

}

},

"RelativePanTiltTranslationSpace": {

"XRange": {

"Min": -2.0,

"Max": 2.0

},

"YRange": {

"Min": -2.0,

"Max": 2.0

}

},

"RelativeZoomTranslationSpace": {

"XRange": {

"Min": -1.0,

"Max": 1.0

}

},

"ContinuousPanTiltVelocitySpace": {

"XRange": {

"Min": -20.0,

"Max": 20.0

},

"YRange": {

"Min": -30.0,

"Max": 30.0

}

},

"ContinuousZoomVelocitySpace": {

"XRange": {

"Min": -15.0,

"Max": 15.0

}

},

"PanTiltSpeedSpace": {

"XRange": {

"Min": 0.0,

"Max": 4.0

}

},

"ZoomSpeedSpace": {

"XRange": {

"Min": 0.0,

"Max": 7.0

}

}

},

"MaximumNumberOfPresets": 100,

"HomeSupported": true,

"Extension": {

"SupportedPresetTour": {

"MaximumNumberOfPresetTours": 10,

"PTZPresetTourOperation": [

"Start",

"Stop",

"Pause",

"Extended"

]

}

},

"AuxiliaryCommands": [

"Wiper start",

"Wiper stop"

]

}

],

"PTZConfigurations": [

{

"@token": "ptz1",

"@MoveRamp": 1,

"@PresetRamp": 2,

"@PresetTourRamp": 3,

"Name": "PTZCfg1",

"NodeToken": "ptzNode1",

"UseCount": 0,

"DefaultPTZSpeed": {

"PanTilt": {

"@x": 0.5,

"@y": 0.5

},

"Zoom": {

"@x": 0.5

}

},

"DefaultPTZTimeout": "PT5S",

"PanTiltLimits": {

"Range": {

"XRange": {

"Min": 0,

"Max": 360

},

"YRange": {

"Min": -90,

"Max": 90

}

}

},

"ZoomLimits": {

"Range": {

"XRange": {

"Min": -9,

"Max": 9

}

}

},

"Extension": {

"PTControlDirection": {

"EFlip": {

"Mode": "ON"

},

"Reverse": {

"Mode": "AUTO"

}

}

}

}

],

"Recordings": [

{

"RecordingToken": "recording1",

"Configuration": {

"Source": {

"SourceId": "camera1_#1",

"Name": "Camera1",

"Location": "Camera1 Location",

"Description": "Camera1 Description",

"Address": "http://example.com/camera1"

},

"Content": "Video",

"MaximumRetentionTime": "P1Y"

},

"Tracks": {

"Track": [

{

"TrackToken": "track1",

"Configuration": {

"TrackType": "Video",

"Description": "Track1 Description"

}

},

{

"TrackToken": "track2",

"Configuration": {

"TrackType": "Audio",

"Description": "Track2 Description"

}

},

{

"TrackToken": "track3",

"Configuration": {

"TrackType": "Metadata",

"Description": "Track3 Description"

}

}

]

},

"EarliestRecording": "2023-01-01T00:00:00Z",

"LatestRecording": "2023-12-31T23:59:59Z",

"RecordingStatus": "Recording"

}

],

"RecordingJobs": [

{

"JobToken": "job1",

"JobConfiguration": {

"RecordingToken": "recording1",

"Mode": "Continuous",

"Priority": 1,

"Source": [

{

"SourceToken": {

"@Type": "Video",

"Token": "source1"

},

"AutoCreateReceiver": false,

"Tracks": [

{

"SourceTag": "track1",

"Destination": "destination1"

}

]

}

]

}

}

],

"replay_session_timeout": 60,

"Doors": [

{

"@token": "door1",

"Name": "MainDoor",

"Description": "Main entrance door",

"Capabilities": {

"@Access": true,

"@AccessTimingOverride": true,

"@Lock": true,

"@Unlock": true,

"@Block": true,

"@DoubleLock": true,

"@LockDown": true,

"@LockOpen": true,

"@DoorMonitor": true,

"@LockMonitor": true,

"@DoubleLockMonitor": true,

"@Alarm": true,

"@Tamper": true,

"@Fault": true

},

"DoorType": "pt:Door",

"Timings": {

"ReleaseTime": "PT1S",

"OpenTime": "PT2S",

"ExtendedReleaseTime": "PT3S",

"DelayTimeBeforeRelovk": "PT4S",

"ExtendedOpenTime": "PT5S",

"PreAlarmTime": "PT6S"

},

"DoorState": {

"DoorPhysicalState": "Closed",

"LockPhysicalState": "Locked",

"DoubleLockPhysicalState": "Unlocked",

"Alarm": "DoorForcedOpen",

"Tamper": {

"Reason": "TamperDetected",

"State": "TamperDetected"

},

"Fault": {

"Reason": "FaultDetected",

"State": "FaultDetected"

},

"DoorMode": "Locked"

}

}

],

"Areas": [

{

"@token": "area1",

"Name": "Area1",

"Description": "Main entrance area"

},

{

"@token": "area2",

"Name": "Area2",

"Description": "Parking area"

}

],

"AccessPoints": [

{

"@token": "access_point1",

"Name": "MainEntrance",

"Description": "Main entrance access point",

"AreaFrom": "area1",

"AreaTo": "area2",

"EntityType": "tdc:Door",

"Entity": "door1",

"Capabilities": {

"@DisableAccessPoint": true,

"@Duress": true,

"@AnomysAccess": true,

"@AccessTaken": true,

"@ExternalAuthorization": true,

"@IdentifierAccess": true,

"@SupportedRecognitionTypes" : "",

"@SupportedFeedbackTypes": ""

},

"AuthenticationProfileToken": "authArea1",

"Enabled": true

}

],

"Credentials": [

{

"@token": "credential1",

"Description": "Main entrance credential",

"CredentialHolderReference": "admin",

"CredentialIdentifier": [

{

"Type": {

"Name": " pt:Card",

"FormatType": "GUID"

},

"ExemptedFromAuthentication": true,

"Value": "1234567890abcdef"

}

],

"CredentialAccessProfile": [

{

"AccessProfileToken": "accessProfile1"

}

],

"Attribute": [

{

"@Name": "AccessLevel",

"@Value": "High"

},

{

"@Name": "AccessGroup",

"@Value": "Group1"

}

],

"State": {

"Enabled": true,

"Reason": "Enabled",

"AntipassbackState": {

"AntipassbackViolated": false

}

}

}

],

"CredentialWhitelists": [

{

"Identifier": {

"Type": {

"Name": "pt:Card",

"FormatType": "GUID"

},

"Value": "1234567890abcdef"

}

}

],

"CredentialBlacklists": [

{

"Identifier": {

"Type": {

"Name": "pt:Card",

"FormatType": "GUID"

},

"Value": "fedcba0987654321"

}

}

],

"AccessProfiles": [

{

"@token": "accessProfile1",

"Name": "MainEntranceAccessProfile",

"Description": "Main entrance access profile",

"AccessPolicy": {

"ScheduleToken": "schedule1",

"Entity": "access_point1"

}

}

],

"Schedules": [

{

"@token": "schedule1",

"Name": "MainEntranceSchedule",

"Description": "Main entrance schedule",

"Standard": "BEGIN:VCALENDAR\r\nBEGIN:VEVENT\r\nDTSTART:20171125T200000\r\nDTEND:20171126T020000\r\nEND:VEVENT\r\nEND:VCALENDAR"

},

{

"@token": "schedule2",

"Name": "ParkingSchedule",

"Description": "Parking schedule",

"Standard": "BEGIN:VCALENDAR\r\nBEGIN:VEVENT\r\nDTSTART:20171125T200000\r\nDTEND:20171126T020000\r\nEND:VEVENT\r\nEND:VCALENDAR",

"SpecialDays": [

{

"GroupToken": "specialDaysGroup2",

"TimeRange": [

{

"From": "2025-12-31T20:00:00Z",

"Until": "2026-01-01T02:00:00Z"

},

{

"From": "2025-12-24T20:00:00Z",

"Until": "2025-12-25T02:00:00Z"

}

]

}

]

}

],

"SpecialDayGroups": [

{

"@token": "specialDaysGroup1",

"Name": "SpecialDaysGroup1",

"Description": "Special days group 1",

"Days": "BEGIN:VCALENDAR\r\nBEGIN:VEVENT\r\nDTSTART:20251231T200000\r\nDTEND:20260101T020000\r\nEND:VEVENT\r\nBEGIN:VEVENT\r\nDTSTART:20251224T200000\r\nDTEND:20251225T020000\r\nEND:VEVENT\r\nEND:VCALENDAR"

},

{

"@token": "specialDaysGroup2",

"Name": "SpecialDaysGroup2",

"Description": "Special days group 2"

}

],

"Receivers": [

{

"Token": "receiver1",

"Configuration": {

"Mode": "AutoConnect",

"MediaUri": "rtsp://example.com/stream1",

"StreamSetup": {

"Stream": "RTP-Unicast",

"Transport": {

"Protocol": "RTSP"

}

}

}

},

{

"Token": "receiver2",

"Configuration": {

"Mode": "AlwaysConnect",

"MediaUri": "http://example.com/stream2",

"StreamSetup": {

"Stream": "RTP-Multicast",

"Transport": {

"Protocol": "HTTP"

}

}

}

}

],

"IPAddressFilter": {

"Type": "Allow",

"IPv4Address": [

{

"Address": "192.168.1.1",

"PrefixLength": 32

},

{

"Address": "192.168.1.5",

"PrefixLength": 32

}

]

},

"scope": [

"onvif://www.onvif.org/location/country/UK",

"onvif://www.onvif.org/type/video_encoder",

"onvif://www.onvif.org/name/IP-Camera",

"onvif://www.onvif.org/hardware/Custom"

],

"event": {

"renew_interval": 1,

"simulate_enable": 0

}

}

]

}

}

The provided JSON string configures the ONVIF® server with a single camera, a single stream, and one PTZ controller. This configuration can be used as a base template to create your ONVIF® configuration with different cameras and streams.

In order to add a new camera, the following steps should be performed:

- Add a new entry to the VideoSources array with the camera token, resolution, frame rate, and imaging settings.

- Add a new entry to the VideoSourceConfigurations array with the camera token and bounds.

- Add a new entry to the VideoEncoderConfigurations array with the camera token, encoding settings, and resolution.

- Add a new entry to the profile array with the camera token, video source configuration, and video encoder configuration.
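The steps above depend on one camera token being used consistently across the four arrays. The following is a minimal sketch of a consistency check, assuming the configuration has already been parsed into plain lists of tokens. The struct and function names are hypothetical and are not part of the OServer API.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

// Hypothetical, simplified view of a parsed configuration: each vector holds
// the camera tokens found in (or referenced by) the corresponding JSON array.
struct ParsedConfigTokens
{
    std::vector<std::string> videoSources;
    std::vector<std::string> videoSourceConfigurations;
    std::vector<std::string> videoEncoderConfigurations;
    std::vector<std::string> profiles;
};

// Returns true when the given camera token appears in all four arrays.
bool isCameraFullyConfigured(const ParsedConfigTokens &cfg, const std::string &token)
{
    auto contains = [&token](const std::vector<std::string> &tokens) {
        return std::find(tokens.begin(), tokens.end(), token) != tokens.end();
    };
    return contains(cfg.videoSources) && contains(cfg.videoSourceConfigurations) &&
           contains(cfg.videoEncoderConfigurations) && contains(cfg.profiles);
}
```

A check like this can be run before passing the JSON string to the server, so that a camera missing from one of the arrays is caught early.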

Callback mechanism

The OServer class provides a callback mechanism to handle ONVIF® requests. The callback function should match the following signature:

ONVIF_RET onvif_global_handler(ONVIF_DEVICE *p_device, ONVIF_MSG_TYPE msg_type, ...);

The callback function will be called for each ONVIF® request received by the server. The msg_type parameter indicates the type of request, and the variable arguments can be used to pass additional data related to the request.

// Example callback function

ONVIF_RET onvif_cb(ONVIF_DEVICE *p_device, ONVIF_MSG_TYPE msg_type, ...)

{

va_list args;

va_start(args, msg_type);

// Handle the ONVIF® request based on the msg_type

switch (msg_type)

{

case GetImagingSettings:

{

// Handle GetImagingSettings request.

// Braces give each case its own scope; without them the declarations below

// would be crossed by the jump to the next case label and fail to compile.

auto vsl = va_arg(args, VideoSourceList *);

auto req = va_arg(args, img_GetImagingSettings_REQ *);

// Use vsl and req to get the imaging settings for the requested video source

break;

}

case SetImagingSettings:

{

// Handle SetImagingSettings request.

auto vsl = va_arg(args, VideoSourceList *);

auto req = va_arg(args, img_SetImagingSettings_REQ *);

// Use vsl and req to set the imaging settings for the requested video source

break;

}

// Add more cases for other message types

}

va_end(args);

return ONVIF_OK;

}

For requests that require additional parameters (which can be information about the request or response), the callback function can use va_arg to retrieve the parameters from the variable argument list. The parameters are defined in the ONVIF® specification for each message type. For example, for the SetImagingSettings message type, the parameters can include a pointer to the VideoSourceList and a pointer to the img_SetImagingSettings_REQ structure.

case SetImagingSettings:

{

auto vsl = va_arg(args, VideoSourceList *);

auto req = va_arg(args, img_SetImagingSettings_REQ *);

}

The req parameter will contain the request data that the ONVIF® client sent, so you can use it to apply settings to your camera. For example:

yourCameraBrightness = req->ImagingSettings.Brightness;

yourCameraContrast = req->ImagingSettings.Contrast;

yourCameraColorSaturation = req->ImagingSettings.ColorSaturation;

Requests that require a response instead of an action also work in the same way. For example, for the GetImagingSettings message type, the parameters can include a pointer to the VideoSourceList and a pointer to the img_GetImagingSettings_REQ structure. The response can be set by modifying the ImagingSettings field of the VideoSourceList.

case GetImagingSettings:

{

auto vsl = va_arg(args, VideoSourceList *);

auto req = va_arg(args, img_GetImagingSettings_REQ *);

}

This time, the req parameter will contain data related to the request, for example, the camera token. You can use this token to determine which camera the ONVIF® client requested settings for. After that, you can set the response data to vsl->ImagingSettings. For example:

vsl->ImagingSettings.Brightness = 25.0f; // Example value, replace with actual camera settings.

vsl->ImagingSettings.Contrast = 50.0f; // Example value, replace with actual camera settings.

vsl->ImagingSettings.ColorSaturation = 75.0f; // Example value, replace with actual camera settings.

The callback function should return an ONVIF_RET value indicating the result of the operation. The possible return values are defined in the ONVIF® specification and include ONVIF_OK, ONVIF_ERR_InvalidToken, and other error codes. Please refer to the /src/OnvifMessageTypes.h file for the full list of message types, their parameters, and return values.
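To make the argument-passing and return-value conventions concrete, here is a self-contained sketch of the same pattern using stand-in types. Everything prefixed with Demo or DEMO_ is hypothetical and exists only for illustration; the real types and codes are declared in the OServer headers. The sketch uses a plain int as the last named parameter, which is a choice made here and not a statement about the library's signature.

```cpp
#include <cassert>
#include <cstdarg>
#include <cstring>

// Stand-in types for illustration only (hypothetical, not the OServer types).
enum DemoRet { DEMO_OK = 0, DEMO_ERR_INVALID_TOKEN = 1 };
enum DemoMsg { DEMO_GET_IMAGING = 1 };
struct DemoVideoSource { const char *token; float brightness; };
struct DemoGetImagingReq { const char *token; };

// Variadic handler: caller and handler must agree on the order and types of
// the extra arguments for every message type.
DemoRet demoHandler(int msgType, ...)
{
    va_list args;
    va_start(args, msgType);
    DemoRet ret = DEMO_OK;
    switch (msgType)
    {
    case DEMO_GET_IMAGING:
    {
        // Read the arguments in the same order the caller passed them.
        auto src = va_arg(args, DemoVideoSource *);
        auto req = va_arg(args, DemoGetImagingReq *);
        if (std::strcmp(src->token, req->token) != 0)
            ret = DEMO_ERR_INVALID_TOKEN; // Unknown camera: reject the request.
        else
            src->brightness = 25.0f;      // Fill the response in place.
        break;
    }
    default:
        break; // Message types without a case are reported as success.
    }
    va_end(args);
    return ret;
}
```

Calling demoHandler(DEMO_GET_IMAGING, &source, &request) with matching tokens fills source.brightness and returns DEMO_OK; a mismatching token yields DEMO_ERR_INVALID_TOKEN and leaves the response untouched.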

The /complianceTestTemplate/ServerCallback.cpp file contains an example of the callback function that handles ONVIF® requests to provide Profile S and Profile T support. Please note that ONVIF profile compliance requires additional features, such as real video streaming, PTZ control, an HTTP server for system restore, and RTSP over HTTP tunneling. These features are implemented in the Compliance Test Template application and are not part of the OServer library functionality. The callback function in the Compliance Test Template handles ONVIF® requests related to video streaming, PTZ control, and other profile-specific features.

Simple example

The application below shows a simple usage of OServer. The application creates an OServer object and initializes it with a simple callback.

#include <iostream>

#include <string>

#include <thread>

#include <chrono>

#include <cstdarg>

#include "OnvifImage.h"

#include "OServer.h"

#include "OnvifGlobalHandler.h"

#include "DefaultConfigContent.h"

/// ONVIF® callback function. This function handles ONVIF® messages.

ONVIF_RET onvifCallback(ONVIF_DEVICE *p_device, ONVIF_MSG_TYPE msg_type, ...)

{

va_list args;

va_start(args, msg_type);

// Unless the request is explicitly rejected, it's better to return ONVIF_OK

// for many requests for test tool compatibility.

ONVIF_RET ret = ONVIF_OK;

// Check the message type and handle it accordingly.

switch (msg_type)

{

// Handle SetImagingSettings request.

case SetImagingSettings:

{

auto vsl = va_arg(args, VideoSourceList *);

auto req = va_arg(args, img_SetImagingSettings_REQ *);

// Get token. Camera token is defined in config file.

std::string cameraName(vsl->VideoSource.token);

// Some logic depends on camera name if we have multiple cameras.

// Apply these settings to your camera.

// Example: yourCamera.setBrightness(req->ImagingSettings.Brightness);

// Example: yourCamera.setContrast(req->ImagingSettings.Contrast);

// Example: yourCamera.setColorSaturation(req->ImagingSettings.ColorSaturation);

break;

}

// Handle GetImagingSettings request.

case GetImagingSettings:

{

auto vsl = va_arg(args, VideoSourceList *);

auto req = va_arg(args, img_GetImagingSettings_REQ *);

// Get token. Camera token is defined in config file.

std::string cameraName(vsl->VideoSource.token);

// Some logic depends on camera name if we have multiple cameras.

// Fill image settings.

auto imageSettings = &vsl->ImagingSettings;

imageSettings->Brightness = 25.0f; // Example value.

imageSettings->Contrast = 50.0f; // Example value.

imageSettings->ColorSaturation = 75.0f; // Example value.

break;

}

// ... Other cases for handling different ONVIF® messages ...

}

va_end(args);

// Return the result of the operation.

return ret;

}

/// Entry point.

int main(int argc, char **argv)

{

// Create ONVIF® server object.

cr::onvif::OServer oServer;

// Initialize ONVIF® server with the JSON configuration and callback function.

if (!oServer.init(g_defaultConfigContent, onvifCallback))

return -1;

// Start ONVIF® server.

if (!oServer.start())

return -1;

// Wait for a key press to stop the server.

std::cout << "ONVIF® server started. Press Enter to stop..." << std::endl;

std::cin.get();

oServer.stop();

return 0;

}

Compliance Test Template

The Compliance Test Template is a ready-to-build application that demonstrates how to use the OServer library to create a working ONVIF server with real RTSP video streaming, PTZ control, snapshot serving, and event handling. It is designed to help developers implement the features required for Profile S and Profile T conformance testing. The template is located in the complianceTestTemplate folder.

Note: Use of this template does not, by itself, imply ONVIF® conformance. Claiming ONVIF® conformance for a product requires completion of ONVIF’s official conformance process and a valid Declaration of Conformance (DoC).

What the Template Does

When launched, the Compliance Test Template application performs the following:

- Initializes two RTSP video streamers (one per camera) using VStreamerMediaMtx with MediaMTX as the backend. Each streamer generates a synthetic video frame showing PTZ position, imaging settings, and camera name overlays.

- Starts an HTTP server (on port 8020) for serving JPEG snapshots and handling system restore requests (required by Profile S).

- Loads or creates the JSON configuration file (OServer.json), dynamically injecting camera stream configurations.

- Initializes and starts the OServer instance with the configuration and a comprehensive callback function.

- Launches HAProxy as a reverse proxy (on port 80) to unify ONVIF services and RTSP-HTTP tunneled streams behind a single port (required by Profile T).

- Runs a main loop that continuously generates video frames with PTZ position visualization and feeds them to the RTSP streamers.

Template File Structure

| File | Description |

|---|---|

| main.cpp | Application entry point. Sets up video streamers, HTTP server, ONVIF server, and HAProxy. Contains the main loop that generates and sends video frames. |

| ServerCallback.cpp | Complete ONVIF callback implementation handling ~30 message types including imaging, media, PTZ, events, snapshots, network, and system operations. This is the primary reference for implementing your own callback. |

| Config.h | Global constants (RTSP port, number of cameras, HTTP port) and shared state variables (camera settings, streamer parameters, PTZ emulator, snapshot data). |

| Ptz.h / Ptz.cpp | PTZ emulator class that simulates pan/tilt/zoom camera movements with continuous motion, absolute/relative positioning, preset save/recall, and home position support. |

| Utils.h / Utils.cpp | Utility functions: IP detection (getHostIp), JSON config loading, stream configuration injection (addStreamToOnvifServerConfig), and value range mapping. |

| DefaultConfigContent.h | Embedded default JSON configuration string used when OServer.json does not exist on disk. |

| haproxyConfigContent.h | Embedded HAProxy configuration template for routing ONVIF and RTSP-HTTP requests through port 80. |

| Example2CameraOnvifConfig.json | Example JSON configuration file for 2 cameras configured with video sources, encoders, and profiles. This file is not used by the template but serves as an example of how to structure the JSON configuration for multiple cameras. |

| images/ | JPEG snapshot images (camera_1.jpg, camera_2.jpg) served when ONVIF clients request snapshots via GetSnapshotUri. |

| 3rdparty/ | Third-party dependencies: VStreamerMediaMtx (RTSP server), CppHttpLib (HTTP server), and VCodecGst (GStreamer video codec). |

Dependencies and Installation

The Compliance Test Template has additional dependencies beyond the core OServer library (which only requires openssl and zlib). Install all required packages with:

# OpenCV — used for drawing PTZ overlays on generated video frames

sudo apt install libopencv-dev

# GStreamer — required by VCodecGst for video encoding

sudo apt install libgstreamer1.0-dev libgstreamer-plugins-base1.0-dev \

libgstreamer-plugins-bad1.0-dev gstreamer1.0-plugins-base \

gstreamer1.0-plugins-good gstreamer1.0-plugins-bad \

gstreamer1.0-plugins-ugly gstreamer1.0-libav

# HAProxy — reverse proxy required for Profile T (RTSP-HTTP tunneling on port 80)

sudo apt install haproxy

Table 2 — Template dependency summary.

| Dependency | Purpose | Source |

|---|---|---|

| VStreamerMediaMtx | RTSP video server (wraps MediaMTX) | Included in 3rdparty/ |

| CppHttpLib | HTTP server for system restore and snapshots | Included in 3rdparty/ (v3.14.0, MIT license) |

| VCodecGst | GStreamer-based video codec | Included in 3rdparty/ |

| OpenCV | Video frame generation and PTZ overlay drawing | System package (libopencv-dev) |

| GStreamer | Low-level video encoding pipeline | System packages (see above) |

| HAProxy | Reverse proxy for single-port access | System package (haproxy) |

Building and Running

Build the template as part of the OServer project:

cd OServer

mkdir build && cd build

cmake ..

make

The compiled binary will be located in build/bin/. Before running, ensure the following files are in the same directory as the binary:

- images/: folder with snapshot JPEG images (camera_1.jpg, camera_2.jpg)

Run the application:

cd build/bin

sudo ./ComplianceTestTemplate

On first launch, the application creates a default OServer.json configuration file from the embedded template. Subsequent launches will use the existing file, allowing you to customize the configuration.
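The load-or-create behavior described above can be sketched as follows. This is a minimal illustration using only the standard library; the function name loadOrCreateConfig is hypothetical and the template's own helper in Utils.cpp may be structured differently.

```cpp
#include <cassert>
#include <cstdio>
#include <fstream>
#include <sstream>
#include <string>

// Returns the content of `path` if the file can be opened. Otherwise writes
// `defaultContent` to `path` and returns it, so later launches reuse the file.
std::string loadOrCreateConfig(const std::string &path, const std::string &defaultContent)
{
    std::ifstream in(path);
    if (in.is_open())
    {
        std::ostringstream buffer;
        buffer << in.rdbuf();
        return buffer.str();
    }
    std::ofstream out(path);
    out << defaultContent;
    return defaultContent;
}
```

With this pattern, edits made to the file on disk take precedence over the embedded default on every subsequent launch; deleting the file restores the default.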

Configuration

The template uses an OServer.json file for ONVIF server configuration. Key settings are described below.

| Parameter | Value | Effect |

|---|---|---|

| interface_ip | Host interface IP | Set this to the IP address of the network interface on which the server should be reachable. |

| interface_port | 80 | Server advertises port 80 (HAProxy) in URLs |

| server_ip | "127.0.0.1" | Server uses this IP for internal binding, but advertises interface_ip in URLs |

| http_port | 10005 | Internal port for ONVIF HTTP server (proxied by HAProxy) |

Adding Cameras Programmatically

Camera configurations are injected into the JSON at runtime using the addStreamToOnvifServerConfig() utility function. This function creates complete entries for VideoSources, VideoSourceConfigurations, VideoEncoderConfigurations, and profile arrays. In the template, two cameras are added:

addStreamToOnvifServerConfig(oServerConfig, "Camera1");

addStreamToOnvifServerConfig(oServerConfig, "Camera2");

The stream name follows the convention {CameraName}Stream1 (e.g., Camera1Stream1, Camera2Stream1).
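The naming convention can be expressed as a small helper. This is a sketch only: makeStreamName is a hypothetical name, and the streamIndex parameter is an assumption added for illustration, since the template only documents Stream1.

```cpp
#include <cassert>
#include <string>

// Builds a stream name following the {CameraName}Stream{N} pattern.
// The template documents only N = 1; other indices are an assumption.
std::string makeStreamName(const std::string &cameraName, int streamIndex = 1)
{
    assert(streamIndex >= 1);
    return cameraName + "Stream" + std::to_string(streamIndex);
}
```

For example, makeStreamName("Camera1") produces the name used for the first camera in the template.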

Default Ports

| Port | Service |

|---|---|

| 80 | HAProxy frontend (single entry point for ONVIF + RTSP-HTTP) |

| 2345 | RTSP server (MediaMTX) |

| 8020 | HTTP server (snapshots + system restore) |

| 10005 | ONVIF HTTP server (internal, proxied through HAProxy) |

Callback Implementation

The ServerCallback.cpp file provides a complete callback implementation that handles all ONVIF message types required for Profile S and Profile T. This is the most important reference file for developers building their own ONVIF server.

The callback handles the following categories of messages:

Imaging — SetImagingSettings, GetImagingSettings, MoveFocus, GetImagingStatus, GetMoveOptions

Media (Profile S) — SetVideoEncoderConfiguration, GetVideoEncoderConfigurationOptions, GetStreamUri, GetSnapshot, GetSnapshotUri, StartMulticastStreaming, StopMulticastStreaming

Media2 (Profile T) — GetStreamUri2, SetMedia2VideoEncoderConfiguration, SetMedia2MetadataConfiguration, GetSnapshotUri2

PTZ — PtzGetStatus, PtzContinuousMove, PtzStop, PtzAbsoluteMove, PtzRelativeMove, PtzSetPreset, PtzGoToPreset, PtzGotoHomePosition

Events — Subscribe, CreatePullPointSubscription, SetEventSynchronizationPoint

Device / Network — SystemReboot, GetHostnameFromDHCP, GetDNSServerFromDHCP, GetNTPServerFromDHCP, GetIpFromDHCP, SetNetworkInterfaces, GetNetworkInterfaces, StartSystemRestore, SetRelayOutputState

Each case in the callback switch statement demonstrates how to extract request parameters using va_arg, determine which camera is being addressed via token matching, apply or return settings, and return appropriate ONVIF_RET codes (e.g., ONVIF_OK, ONVIF_ERR_InvalidToken).

PTZ Emulator

The Ptz class simulates a physical PTZ camera controller. It runs a background thread that smoothly transitions between positions, making it suitable for testing PTZ-related ONVIF commands.

Table 3 — PTZ emulator parameters.

| Parameter | Range | Description |

|---|---|---|

| Pan | 0° to 360° | Horizontal rotation (shared across cameras) |

| Tilt | -90° to 90° | Vertical rotation (shared across cameras) |

| Zoom (per camera) | 1x to 10x | Independent zoom level for each camera |

The emulator supports the following operations:

- Continuous movement — Pan/tilt/zoom at a specified speed until a stop command is received.

- Absolute positioning — Move directly to a specified pan, tilt, or zoom position.

- Relative movement — Move by a relative offset from the current position.

- Presets — Save the current position as a named preset and recall it later.

- Home position — Return to a predefined home position.

In the main loop, the current PTZ position is rendered as a moving colored rectangle on the generated video frames, providing visual feedback of the emulated camera movement.
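A single step of continuous movement within the ranges from Table 3 could look like the sketch below. The names are hypothetical and this is not the Ptz class itself. In particular, wrapping pan around at 360° while clamping tilt and zoom at their limits is an assumption made for this example.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Hypothetical PTZ state: pan and tilt in degrees, zoom as a magnification factor.
struct DemoPtzState { double pan; double tilt; double zoom; };

// Wraps an angle into [0, 360). Wrapping (instead of clamping) is an assumption.
double wrapPan(double degrees)
{
    const double r = std::fmod(degrees, 360.0);
    return r < 0.0 ? r + 360.0 : r;
}

// Limits a value to the closed interval [lo, hi].
double clampTo(double value, double lo, double hi)
{
    return std::max(lo, std::min(value, hi));
}

// Advances the state by one time step `dt` (seconds) at the given speeds
// (degrees per second for pan and tilt, zoom factor per second for zoom).
DemoPtzState stepContinuous(DemoPtzState s, double panSpeed, double tiltSpeed,
                            double zoomSpeed, double dt)
{
    s.pan = wrapPan(s.pan + panSpeed * dt);
    s.tilt = clampTo(s.tilt + tiltSpeed * dt, -90.0, 90.0);
    s.zoom = clampTo(s.zoom + zoomSpeed * dt, 1.0, 10.0);
    return s;
}
```

A background thread would call a step function like this at a fixed interval until a stop command sets all speeds to zero.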

Snapshot Support

The template serves JPEG snapshot images via its HTTP server. At startup, it loads image files from the images/ directory:

- images/camera_1.jpg: snapshot for Camera 1

- images/camera_2.jpg: snapshot for Camera 2

When an ONVIF client sends a GetSnapshotUri or GetSnapshotUri2 request, the callback returns a URL pointing to the HTTP server (e.g., http://{host}:8020/Camera1Stream1). The HTTP server then serves the corresponding JPEG image. Place your own snapshot images in these paths to customize the served content.

Profile S Support

Profile S requires RTSP video streaming, snapshot access, and system restore functionality. The template implements these through three components:

- RTSP Streaming (VStreamerMediaMtx + MediaMTX): Each camera gets its own RTSP stream accessible at rtsp://{host}:2345/{CameraName}Stream1. The streams are configured with H.264 encoding at 1280x720 resolution and 30 FPS by default. Stream parameters (codec, resolution, bitrate, GOP size) can be changed dynamically through ONVIF SetVideoEncoderConfiguration requests.

- Snapshot HTTP Server (CppHttpLib): An HTTP server on port 8020 serves JPEG images for snapshot requests and provides a /restore endpoint for the ONVIF system restore feature. The /restore endpoint accepts application/octet-stream POST requests. The template returns a 200 OK response, but you should implement your actual restore logic in this handler.

- System Restore: When the ONVIF client calls StartSystemRestore, the callback returns the restore URL (http://{host}:8020/restore). The client then uploads the restore data to this URL.
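The RTSP, snapshot, and restore URLs described above share a simple structure. The sketch below builds them from a host and a stream name using the template's default ports; the function and constant names are hypothetical and are not taken from the template source.

```cpp
#include <cassert>
#include <string>

// Default ports used by the template (see the Default Ports table).
constexpr int kDemoRtspPort = 2345;
constexpr int kDemoHttpPort = 8020;

// rtsp://{host}:2345/{streamName}
std::string makeRtspUri(const std::string &host, const std::string &streamName)
{
    return "rtsp://" + host + ":" + std::to_string(kDemoRtspPort) + "/" + streamName;
}

// http://{host}:8020/{streamName}, as returned for snapshot URI requests.
std::string makeSnapshotUri(const std::string &host, const std::string &streamName)
{
    return "http://" + host + ":" + std::to_string(kDemoHttpPort) + "/" + streamName;
}

// http://{host}:8020/restore, as returned for StartSystemRestore.
std::string makeRestoreUri(const std::string &host)
{
    return "http://" + host + ":" + std::to_string(kDemoHttpPort) + "/restore";
}
```

Keeping URL construction in one place makes it easier to change the ports later without touching each callback case.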

Profile T Support

Profile T adds requirements for RTSP-HTTP tunneling and single-port access. The template uses HAProxy as a reverse proxy to meet these requirements automatically. An RTSP-HTTP capable video server is required for Profile T, and the template application uses VStreamerMediaMtx with the appropriate configuration to support RTSP-HTTP tunneling.

The main challenge of Profile T is making both ONVIF services and RTSP-HTTP tunneled streams accessible through the same port (port 80). This can be achieved by using a reverse proxy that routes requests based on URL path. The template includes an embedded HAProxy configuration that routes ONVIF service requests to the internal ONVIF server port (10005) and RTSP-HTTP tunneled stream requests to the RTSP server port (2345). This allows clients to access all services through a single port, as required by Profile T.

How It Works

At startup the application:

- Generates haproxy.cfg from the embedded template in haproxyConfigContent.h.

- Launches HAProxy as a child process bound to port 80.

HAProxy routes incoming requests based on URL path:

| URL Path | Routed To | Purpose |

|---|---|---|

| /onvif/* | 127.0.0.1:10005 | ONVIF service requests |

| /event_service/* | 127.0.0.1:10005 | ONVIF event subscription endpoints |

| /SystemLog, /AccessLog, /SupportInfo, /SystemBackup | 127.0.0.1:10005 | ONVIF system information endpoints |

| /Camera*Stream* | 127.0.0.1:2345 | RTSP-HTTP tunneled video streams |

This allows ONVIF clients to access both ONVIF services and RTSP-HTTP tunneled video streams through a single port (80), which is a Profile T requirement.
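The routing decision in the table above can be mirrored in a few lines of C++ for reasoning or unit testing. This is only an illustration of the path rules: the actual routing is performed by HAProxy, the function name is hypothetical, and returning -1 for unmatched paths is an assumption (the embedded HAProxy configuration may handle them differently).

```cpp
#include <cassert>
#include <string>

constexpr int kDemoOnvifBackendPort = 10005; // Internal ONVIF HTTP server.
constexpr int kDemoRtspBackendPort = 2345;   // RTSP server (RTSP-HTTP tunneling).

// Returns true when `s` begins with `prefix`.
bool startsWith(const std::string &s, const std::string &prefix)
{
    return s.compare(0, prefix.size(), prefix) == 0;
}

// Maps a request path to the backend port from the routing table,
// or -1 when no rule matches (assumption for this sketch).
int selectBackendPort(const std::string &path)
{
    if (startsWith(path, "/onvif/") || startsWith(path, "/event_service/"))
        return kDemoOnvifBackendPort;
    for (const char *exact : {"/SystemLog", "/AccessLog", "/SupportInfo", "/SystemBackup"})
    {
        if (path == exact)
            return kDemoOnvifBackendPort;
    }
    // /Camera*Stream*: begins with "/Camera" and contains "Stream" afterwards.
    if (startsWith(path, "/Camera") && path.find("Stream", 7) != std::string::npos)
        return kDemoRtspBackendPort;
    return -1;
}
```

A table-driven check like this is handy when adding cameras or endpoints, because a path that maps to -1 would not be reachable through port 80 under these rules.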

Interface IP and Port Configuration

The interface_ip and interface_port parameters in the JSON configuration control what the ONVIF server advertises in its service URLs:

| Parameter | Value | Effect |

|---|---|---|

| interface_ip | "192.168.1.100" | Server uses this specific IP in all advertised URLs |

| interface_port | 80 | Server advertises port 80 (HAProxy) in URLs |

| server_ip | "127.0.0.1" | Server uses this IP for internal binding, but advertises interface_ip in URLs |

| http_port | 10005 | Internal port for ONVIF HTTP server (proxied by HAProxy) |

If Profile T is not required, OServer can be started directly on the desired network interface and port without HAProxy. The following table shows an example:

| Parameter | Value | Effect |

|---|---|---|

| interface_ip | "" (empty) | Server will use server_ip parameter for advertised URLs. |

| interface_port | -1 | Server uses http_port value for advertised URLs. |

| server_ip | "127.0.0.1" | Server uses this IP for internal binding and advertises it in URLs. |

| http_port | 10005 | Real port for both internal binding and advertised URLs. |
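The fallback rules from the two tables above can be summarized in code. The sketch below is an illustration of the documented behavior, not the library's implementation; DemoNetConfig and advertisedAuthority are hypothetical names.

```cpp
#include <cassert>
#include <string>

// Hypothetical mirror of the four JSON parameters discussed above.
struct DemoNetConfig
{
    std::string interfaceIp; // "interface_ip", empty when unset
    int interfacePort;       // "interface_port", -1 when unset
    std::string serverIp;    // "server_ip"
    int httpPort;            // "http_port"
};

// Returns the "ip:port" pair that would appear in advertised service URLs:
// interface_ip/interface_port when set, otherwise server_ip/http_port.
std::string advertisedAuthority(const DemoNetConfig &c)
{
    const std::string &ip = c.interfaceIp.empty() ? c.serverIp : c.interfaceIp;
    const int port = (c.interfacePort == -1) ? c.httpPort : c.interfacePort;
    return ip + ":" + std::to_string(port);
}
```

With the HAProxy setup the result is 192.168.1.100:80, while the direct setup falls back to the internal address and port.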

Running ONVIF Conformance Tests

The Compliance Test Template is intended to be exercised with the ONVIF Device Test Tool running on a separate host. Several environmental requirements must be met before the test suite passes — these are part of how Profile S/T conformance is defined and are not bugs in the template or in OServer.

RTSP must require digest authentication (Profile T / Profile M)

ONVIF Core specification § 5.2.2 mandates that Profile T and Profile M devices require HTTP Digest authentication on RTSP. In practice the RTSP server must respond with RTSP/1.0 401 Unauthorized and a WWW-Authenticate: Digest … challenge to an unauthenticated DESCRIBE request. If the RTSP server allows anonymous access, the test tool reports “Digest authentication is mandatory for Profile T and Profile M” and every MEDIA2_RTSS-* test fails.

Configure your RTSP server with explicit user credentials rather than a wildcard "any" user with an empty password. For MediaMTX:

authMethod: internal

rtspAuthMethods: [digest]

authInternalUsers:

  # Real auth for external RTSP clients (test tool, NVR)

  - user: admin

    pass: admin

    ips: []

    permissions:

      - action: read

      - action: playback

  # Local publishers (e.g., the streaming application) without password

  - user: any

    pass:

    ips: ['127.0.0.1', '::1']

    permissions:

      - action: publish

Profile S alone requires digest only “when authentication is necessary”, so anonymous RTSP is permitted for Profile S-only devices.
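Before running the test tool, it can help to check that an unauthenticated DESCRIBE really produces a digest challenge. The sketch below inspects a raw RTSP response string that has already been captured by some other means. The function name is hypothetical, and the exact-case header match is a simplification, since RTSP header names are case-insensitive.

```cpp
#include <cassert>
#include <string>

// Returns true when a raw RTSP response is a 401 that carries a Digest
// challenge. Simplification: the header name is matched case-sensitively.
bool isDigestChallenge(const std::string &response)
{
    const bool unauthorized = response.rfind("RTSP/1.0 401", 0) == 0;
    const bool digest = response.find("WWW-Authenticate: Digest") != std::string::npos;
    return unauthorized && digest;
}
```

A 200 OK response to an unauthenticated DESCRIBE, or a challenge that offers only Basic authentication, would return false here and indicates that the RTSP server configuration needs attention for Profile T.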

Test tool must use Administrator-level credentials

The Profile T suite executes administrative operations such as SetSystemDateAndTime, CreateUsers, DeleteUsers, SetUser, SetDiscoveryMode and SetScopes. OServer’s auth handler restricts these to users with UserLevel = Administrator. Configure the ONVIF Device Test Tool with an Administrator account from the device user list (the template default is admin / admin). Running tests with a User-level account causes admin-only tests to fail — the device returns ter:NotAuthorized as HTTP 400 (handled correctly since 3.1.6), but the operation itself is rejected by design.

Notification listener firewall on the test host

For the BASIC NOTIFICATION INTERFACE tests (EVENT-2-1-*), the test tool spins up an HTTP listener on a dynamically chosen port (a different port per test run). The device makes an outgoing TCP connection from the OServer process to that listener to deliver Notify callbacks. If the firewall on the test host blocks inbound connections from the device, the device side observes a TCP connect timeout and the tool reports “No notification received!”.

Either:

- Allow the ONVIF Device Test Tool process through the test-host firewall (Windows: add an inbound rule for the executable so any port is permitted), or

- Disable the firewall on the test interface for the duration of conformance testing.

This is a host-network configuration matter; OServer cannot work around it from the device side.

Test application

The OServer library provides a test application located in the test folder. The test application demonstrates the usage of the OServer library and provides examples of how to use the library’s features. The test application includes all features of the OServer library but does not provide any RTSP streams.

To run the test application, the /test/onvif.json file should be located in the same folder as the test application executable. This file contains the configuration for the ONVIF® server, including device information, supported services, and capabilities.

Dependencies

The OServer library depends on openssl and zlib. On Debian or Ubuntu, install them with the following command:

sudo apt install libssl-dev zlib1g-dev

Build and connect to your project

Typical commands to build OServer library:

cd OServer

mkdir build

cd build

cmake ..

make

If you want to connect the OServer library to your CMake project as source code, you can follow these steps. For example, if your repository has the following structure:

CMakeLists.txt

src

    CMakeLists.txt

    yourLib.h

    yourLib.cpp

Create a 3rdparty folder in your repository and place the OServer repository folder there. Your repository’s new structure will be:

CMakeLists.txt

src

    CMakeLists.txt

    yourLib.h

    yourLib.cpp

3rdparty

    OServer

Create a CMakeLists.txt file in the 3rdparty folder. The CMakeLists.txt should contain:

cmake_minimum_required(VERSION 3.13)

################################################################################

## 3RD-PARTY

## dependencies for the project

################################################################################

project(3rdparty LANGUAGES CXX)

################################################################################

## SETTINGS

## basic 3rd-party settings before use

################################################################################

# To inherit the top-level architecture when the project is used as a submodule.

SET(PARENT ${PARENT}_YOUR_PROJECT_3RDPARTY)

# Disable self-overwriting of parameters inside included subdirectories.

SET(${PARENT}_SUBMODULE_CACHE_OVERWRITE OFF CACHE BOOL "" FORCE)

################################################################################

## CONFIGURATION

## 3rd-party submodules configuration

################################################################################

SET(${PARENT}_SUBMODULE_OSERVER ON CACHE BOOL "" FORCE)

if (${PARENT}_SUBMODULE_OSERVER)

SET(${PARENT}_OSERVER ON CACHE BOOL "" FORCE)

endif()

################################################################################

## INCLUDING SUBDIRECTORIES

## Adding subdirectories according to the 3rd-party configuration

################################################################################

if (${PARENT}_SUBMODULE_OSERVER)

add_subdirectory(OServer)

endif()

The OServer default configuration supports Profile S mandatory and optional features. To enable or disable other features, the following flags can be used in the CMakeLists.txt file:

# Default configuration supports Profile S.

SET(ONVIF_HTTPD_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_MEDIA_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_IMAGE_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_DEVICE_IO_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_MEDIA2_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_PTZ_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_IP_FILTER_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_PROFILE_T_SUPPORT ON CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_MPEG4_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_PROFILE_G_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_PROFILE_C_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_CREDENTIAL_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_ACCESS_RULES_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_SCHEDULE_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_AUDIO_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_VIDEO_ANALYTICS_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_THERMAL_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_RECEIVER_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_STORAGE_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_PROVISIONING_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_GEOLOCATION_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

SET(ONVIF_DOT11_SUPPORT OFF CACHE BOOL "" ${REWRITE_FORCE})

The 3rdparty/CMakeLists.txt file adds the OServer folder to your project. By default, the test application and examples are excluded from compilation when OServer is included as a sub-repository. Your repository’s new structure will be:

CMakeLists.txt

src

    CMakeLists.txt

    yourLib.h

    yourLib.cpp

3rdparty

    CMakeLists.txt

    OServer

Next, you need to include the 3rdparty folder in the main CMakeLists.txt file of your repository. Add the following line at the end of your main CMakeLists.txt:

add_subdirectory(3rdparty)

Next, link the OServer library to your target in your src/CMakeLists.txt file:

target_link_libraries(${PROJECT_NAME} OServer)

Done!